Technical Specifications of Claude Opus 4.7

Technical specifications

| Item | Claude Opus 4.7 |
|---|---|
| Provider | Anthropic |
| API model ID | claude-opus-4-7 |
| Model family | Claude Opus |
| Input modalities | Text, image |
| Output modality | Text |
| Context window | 1M tokens |
| Max output tokens | 128K tokens |
| Thinking mode | Adaptive thinking (thinking: {type: "adaptive"}); Anthropic also introduced an xhigh effort control |
| Best suited for | Complex reasoning, coding agents, vision-heavy workflows, long-horizon tool use |
| Latency | Moderate; faster median latency than Opus 4.6 on coding tasks |
| Knowledge cutoff | January 2026 (reliable knowledge cutoff); training data cutoff January 2026 |
| Launch status | Generally available (April 16, 2026) |

What is Claude Opus 4.7?

Claude Opus 4.7 is the flagship Claude model for hard, multi-step work. Anthropic’s migration guide says it is highly autonomous and performs exceptionally well on long-horizon agentic work, knowledge work, vision tasks, and memory tasks. The same guide also notes that it supports the same major feature set as Claude Opus 4.6, including 1M-token context, 128K output tokens, adaptive thinking, prompt caching, batch processing, the Files API, PDF support, vision, and the full set of server-side and client-side tools.
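As a rough illustration of how the adaptive thinking setting might appear in a request body, the sketch below builds an Anthropic Messages-style payload in Python. The model ID, the `thinking: {type: "adaptive"}` value, and the 128K output limit come from the specifications above; the helper name `build_payload`, the sample prompt, and the default `max_tokens` are illustrative assumptions, and nothing is sent over the network.

```python
import json

# Values taken from the specification table in this article.
MODEL_ID = "claude-opus-4-7"
MAX_OUTPUT_TOKENS = 128_000  # "128K tokens"; assumed here to mean 128,000


def build_payload(prompt: str, max_tokens: int = 4096) -> dict:
    """Build a Messages-style request body with adaptive thinking enabled.

    Hypothetical helper for illustration only; check the official API
    reference for the authoritative list of fields.
    """
    if not 0 < max_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError(f"max_tokens must be in 1..{MAX_OUTPUT_TOKENS}")
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "thinking": {"type": "adaptive"},
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    # Print the JSON body that would be sent to the API.
    print(json.dumps(build_payload("Summarize this design doc."), indent=2))
```

Keeping the limit check next to the payload builder means an oversized `max_tokens` fails locally instead of costing a round trip.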

Main Features of Claude Opus 4.7

- Adaptive Thinking: Automatically allocates more “thinking” time to complex problems while delivering fast responses to simpler ones.

- Advanced Agentic Capabilities: Excels at orchestrating multi-tool workflows, maintaining memory across sessions, sustaining long-running tasks, and recovering gracefully from errors.

- Production-Grade Coding: Plans carefully, operates reliably in large codebases, self-corrects, and delivers higher-quality code with fewer iterations.

- Enhanced Vision & Multimodal: 98.5% on XBOW visual-acuity benchmark (vs. 54.5% for Opus 4.6); improved interpretation of complex diagrams and chemical structures.

- Enterprise Reliability: Strong performance on spreadsheets, documents, slides, and multi-day projects with consistent context retention.

Performance Benchmarks of Claude Opus 4.7

Anthropic and third-party evaluations report gains for Opus 4.7 across coding, agentic workflows, visual reasoning, and enterprise tasks. Here are the standout results:

- 93-task internal coding benchmark: +13% resolution rate over Opus 4.6; solved 4 tasks that neither Opus 4.6 nor Sonnet 4.6 could complete. Faster median latency and stricter instruction-following.

- CursorBench: 70% success (vs. 58% for Opus 4.6) — a meaningful jump in autonomous coding capabilities.

- Rakuten-SWE-Bench (production-level software engineering): Resolves 3× more tasks than Opus 4.6, with double-digit improvements in Code Quality and Test Quality.

- Internal research-agent benchmark (six modules): Tied for top score at 0.715 overall; best long-context consistency. General Finance module: 0.813 (vs. 0.767 for 4.6).

- Visual-acuity computer-use benchmark: 98.5% (vs. 54.5% for Opus 4.6).

- BigLaw Bench: 90.9% at high effort level.

- OfficeQA Pro: 21% fewer errors when referencing source material.

- Factory Droids & Bolt agentic workflows: 10–15% lift in task success; up to 10% better in best cases; 14% improvement at fewer tokens with one-third the tool errors.

Claude Opus 4.7 vs GPT-5.4 vs Gemini 3.1 Pro

| Parameter | Claude Opus 4.7 | GPT-5.4 (incl. Pro/Thinking) | Gemini 3.1 Pro |
|---|---|---|---|
| Context Window | 1M tokens | ~1M tokens | 1M–2M tokens (varies by deployment) |
| Max Output Tokens | Up to 128K+ | High (supports long outputs) | 64K+ |
| Input/Output Support | Text + high-res image; text output | Text + image; native computer use | Native multimodal (text, image, video, audio) |
| Reasoning Modes | Adaptive Thinking (dynamic) | Thinking (low/high/xhigh effort) | Thinking/High effort modes |
| API Pricing (approx.) | $5 / $25 per M tokens | $2.50 / $15 per M tokens | Lower (~$2 / $10–12 per M tokens) |
| Release Date | April 16, 2026 | March 5, 2026 | February 19, 2026 |
| Key Strength | Agentic coding & reliability | Computer use & efficiency | Multimodal & broad reasoning |

These gains translate directly to fewer iterations, lower token spend, and higher reliability in production — critical for cost-conscious teams.
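To make the token-spend comparison concrete, here is a small sketch that estimates per-request cost from the approximate list prices in the comparison table (input / output, USD per million tokens). The function name `estimate_cost`, the dictionary keys (labels, not API model IDs), and the example token counts are assumptions for illustration. Gemini 3.1 Pro is left out because the table gives only a price range for it.

```python
# USD per million tokens (input, output), copied from the comparison table.
# Keys are display labels for this sketch, not necessarily API model IDs.
PRICES_PER_MTOK = {
    "claude-opus-4-7": (5.00, 25.00),
    "gpt-5.4": (2.50, 15.00),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at list price."""
    in_price, out_price = PRICES_PER_MTOK[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200,000 input tokens and 20,000 output tokens.
    for name in PRICES_PER_MTOK:
        print(f"{name}: ${estimate_cost(name, 200_000, 20_000):.2f}")
    # claude-opus-4-7: $1.50
    # gpt-5.4: $0.80
```

List price is only part of the picture: if a model finishes a task in fewer iterations or with fewer tokens, the token counts passed to `estimate_cost` shrink accordingly.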

Vs. Claude Opus 4.6: Clear step-change—13% better coding resolution, 10–15% higher agentic success rates, dramatically improved vision, and stronger long-context consistency. Low-effort Opus 4.7 often matches or exceeds medium-effort 4.6 while using fewer tokens.

Vs. Competitors (as of April 2026 benchmarks):

- Faster than GPT-5.4 (xhigh) on CodeRabbit harness.

- Outperforms prior Claude models and rivals or exceeds GPT-5.4 and Gemini 3.1 Pro on agentic coding, tool-use consistency, and professional knowledge work.

- Maintains Anthropic’s leadership in nuanced writing, instruction-following, and safety calibration.

Opus 4.7 is positioned as the premier choice when maximum intelligence and reliability matter most; lighter models (Sonnet 4.6 or Haiku 4.5) remain preferable for speed or cost-sensitive workloads.

How to Access Claude Opus 4.7 via CometAPI

CometAPI, a leading AI model aggregator, provides seamless, OpenAI-compatible access to Anthropic’s latest models, including Opus 4.7 (model identifier typically follows the pattern anthropic/claude-opus-4-7 or the official alias).

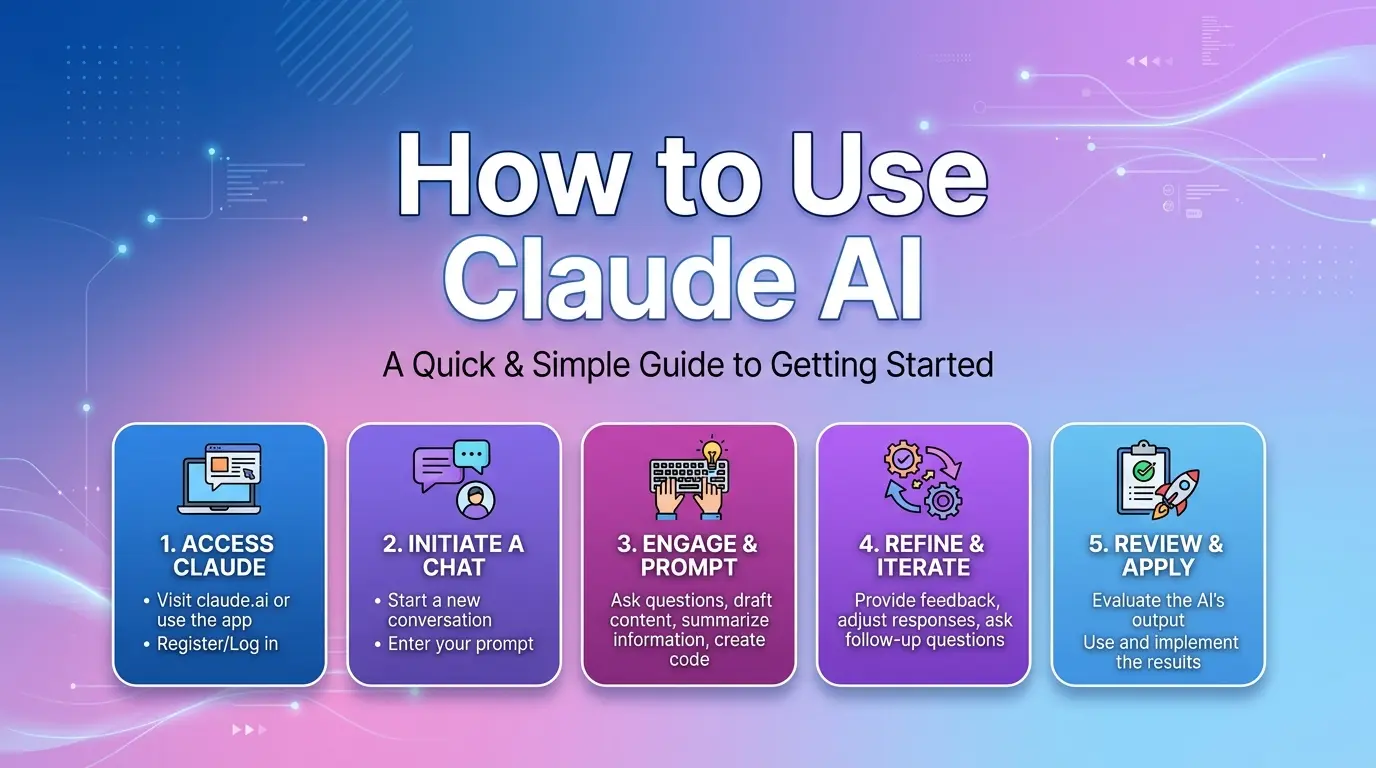

How to access and use Claude Opus 4.7 API

Step 1: Sign Up for API Key

Log in to cometapi.com, registering first if you do not have an account yet. In your CometAPI console, open the personal center, click “Add Token” under API token, and submit. Copy the generated token key (of the form sk-xxxxx); this is your API access credential.

Step 2: Send Requests to Claude Opus 4.7 API

Select the “claude-opus-4-7” endpoint and set the request body. The request method and request body format are documented in our website API doc, and our website also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with the actual CometAPI key from your account. The model can be called in two formats: Anthropic Messages format and Chat format.

Insert your question or request into the content field; this is what the model will respond to.
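The request described in this step can be sketched in Python with only the standard library. This is a minimal sketch under stated assumptions: the base URL is a placeholder, and the `/v1/messages` path, the bearer-token header, and the `COMETAPI_KEY` environment variable name are guesses for illustration. Take the real values from the CometAPI API doc. The code only builds the request; the call that would send it is left commented out.

```python
import json
import os
import urllib.request

# ASSUMPTION: placeholder base URL; use the one given in the CometAPI API doc.
BASE_URL = "https://example.invalid"


def build_messages_request(
    api_key: str, prompt: str, base_url: str = BASE_URL
) -> urllib.request.Request:
    """Build (but do not send) a Messages-format POST request."""
    body = {
        "model": "claude-opus-4-7",
        "max_tokens": 1024,
        # The user's question goes in the content field.
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/messages",  # ASSUMPTION: Messages-style path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # ASSUMPTION: bearer auth
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical environment variable; falls back to the doc's placeholder.
    key = os.environ.get("COMETAPI_KEY", "<YOUR_API_KEY>")
    req = build_messages_request(key, "Explain prompt caching in two sentences.")
    print(req.get_method(), req.full_url)
    # Once the URL and headers are confirmed against the API doc:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
```

Reading the key from the environment keeps the sk-xxxxx token out of source control.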

Step 3: Retrieve and Verify Results

Process the API response to get the generated answer. After processing, the API responds with the task status and output data. Enable features such as streaming, prompt caching, or long-context handling via standard parameters.
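Extracting the generated answer could look like the sketch below, which handles the two response shapes that correspond to the Anthropic Messages format and the Chat format. The helper name `extract_text` and the sample dictionaries are hand-written for illustration, not captured API output, and a real response carries more fields than shown here.

```python
def extract_text(response: dict) -> str:
    """Return the generated text from a Messages- or Chat-format response."""
    # Messages format: "content" is a list of blocks; keep only text blocks.
    content = response.get("content")
    if isinstance(content, list):
        return "".join(
            block.get("text", "")
            for block in content
            if block.get("type") == "text"
        )
    # Chat format: the answer sits at choices[0].message.content.
    choices = response.get("choices")
    if choices:
        return choices[0]["message"]["content"] or ""
    raise ValueError("unrecognized response shape")


if __name__ == "__main__":
    # Hand-written samples that mimic the two formats.
    messages_style = {"content": [{"type": "text", "text": "Hello from Opus."}]}
    chat_style = {"choices": [{"message": {"role": "assistant", "content": "Hi."}}]}
    print(extract_text(messages_style))  # Hello from Opus.
    print(extract_text(chat_style))      # Hi.
```

Filtering on the block type matters when thinking is enabled, because a Messages-format response can then include non-text blocks alongside the answer.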

Why Choose CometAPI?

CometAPI delivers the same underlying Claude Opus 4.7 model as the official Anthropic API but at significantly lower effective cost through smart routing, global infrastructure, bulk purchasing, and enterprise-grade proxies. Developers and enterprises gain:

- Cost Savings: Substantially lower per-token rates than direct Anthropic pricing ($5/$25 per million tokens), with volume discounts and optimized routing that can reduce expenses by a meaningful margin while maintaining performance.

- Unified Access: One OpenAI-compatible endpoint for 500+ models across providers (OpenAI, Anthropic, Google, xAI, etc.), enabling effortless model swapping and A/B testing.

- High Reliability: Intelligent primary/backup routing and multi-region servers minimize downtime.

- Developer-Friendly Features: Usage analytics, response visualization, comparative testing tools, lightweight SDKs, and no data retention for privacy.

- Seamless Production Integration: Supports long-context features, streaming, caching, and Claude-specific capabilities without rewriting code.
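The model-swapping point above can be pictured with a short sketch: with a Chat-format endpoint, an A/B comparison needs only the `model` field to change. The helper name `build_chat_payload` is hypothetical, and `<OTHER_MODEL_ID>` is a deliberate placeholder to be replaced with an ID from the provider's model list.

```python
def build_chat_payload(model: str, prompt: str, stream: bool = False) -> dict:
    """Build a Chat-format request body; only `model` varies between runs."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }


if __name__ == "__main__":
    prompt = "Refactor this function for readability."
    # "<OTHER_MODEL_ID>" is a placeholder, not a real model identifier.
    candidates = ["claude-opus-4-7", "<OTHER_MODEL_ID>"]
    payloads = [build_chat_payload(m, prompt) for m in candidates]
    for p in payloads:
        print(p["model"], len(p["messages"]))
```

Because the prompt and parameters are identical across payloads, any difference in the responses can be attributed to the model.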

In practice, teams using CometAPI for previous Opus versions (4.6 and earlier) report faster iteration, lower operational costs, and identical model quality, making it the preferred gateway for scalable, budget-conscious deployment of frontier models like Claude Opus 4.7.
